Artificial Intelligence and Process Safety: Understanding Paradox

This post is the third in a series on the use of Artificial Intelligence (AI) and Large Language Models (LLMs) in the process safety management discipline. The first two posts are:

In this post we consider the role of paradox in Process Safety Management (PSM).

Proper Names

The following quotation is often attributed to Confucius.

The beginning of wisdom is to call things by their proper name.

The term Artificial Intelligence is not the ‘proper name’ for programs such as ChatGPT, Gemini and Claude. These LLMs are not conscious minds; they are not intelligent. They do not possess understanding, judgment, intuition, or experience. They are software systems trained to identify statistical relationships between tokens (words, word fragments, and symbols) in enormous data sets. They are powerful tools, but they are only tools.

Calling such systems ‘intelligent’ is more than misleading: it credits them with a capability they do not have. Professionals in every discipline therefore need to be very cautious about allowing AI to make decisions.

Paradox

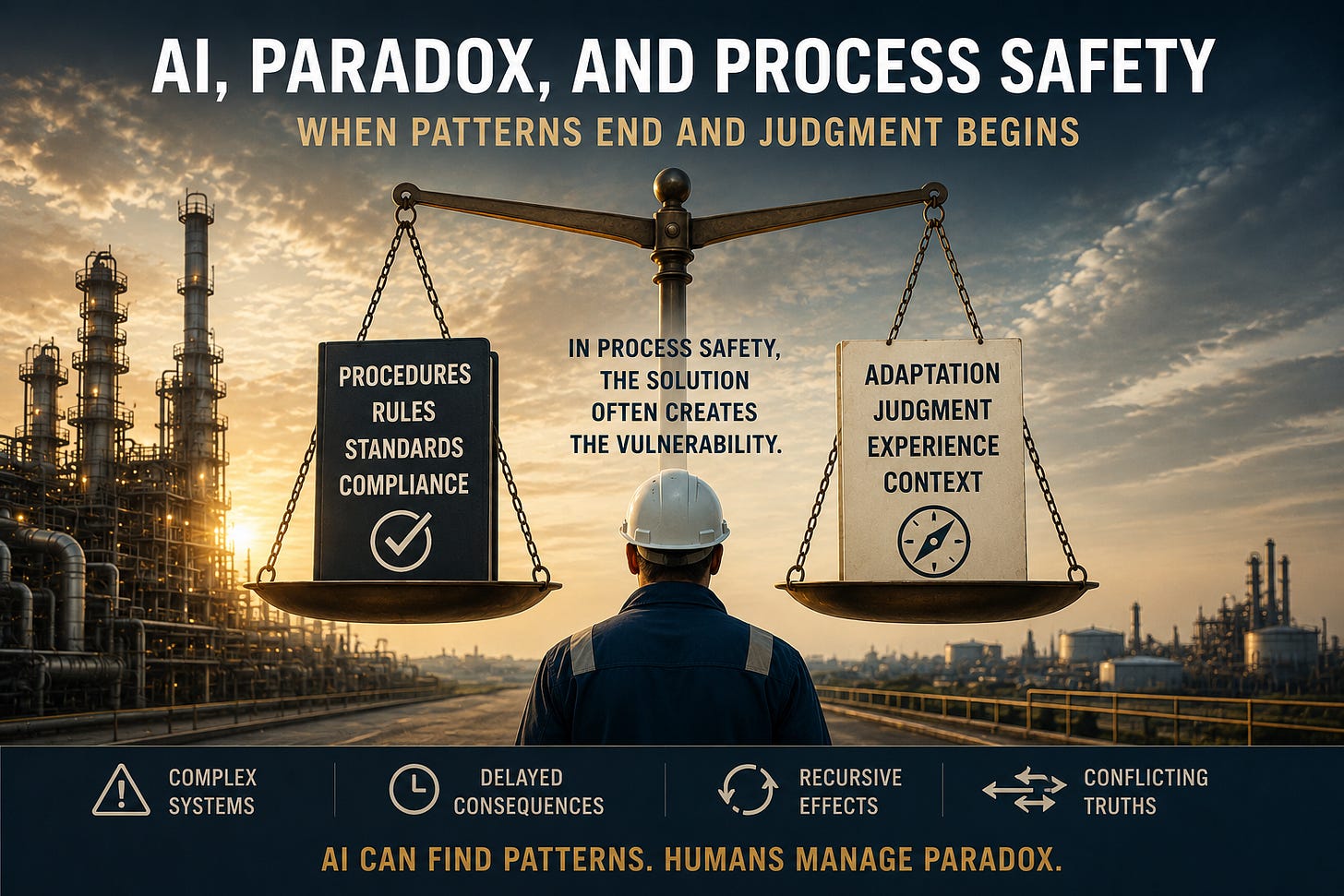

This caution applies particularly to process safety because the discipline often involves something deeper than pattern recognition.

Process safety involves paradox.

For example, many incident reports identify the lack of clear, comprehensive operating procedures as an important contributing factor. The resulting recommendation is that operating procedures should be thorough, complete, accurate and up to date, and that they should anticipate as many operating situations as possible.

The catch is that no written procedure can describe every possible operating condition, equipment failure, weather event, utility disruption, or human interaction. Real facilities are too complex.

Therefore, safe operation requires two apparently opposing behaviors:

Strict procedural compliance.

Intelligent adaptation when reality differs from the procedure, often during an emergency.

This is more than a balancing act. The very discipline that prevents unsafe improvisation can also become dangerous if it prevents intelligent response to conditions the procedure did not anticipate.

An experienced process safety professional understands this paradox and can work within it. An LLM, on the other hand, may struggle with this type of challenge, because such situations contain multiple valid but conflicting objectives that cannot be resolved through straightforward pattern matching.

In other words, can LLMs handle novel conditions?

LLMs do not reason about the world in the same way humans do. They do not possess direct experience, tacit operational understanding, or physical intuition. They generate responses based on statistical relationships learned during training. This means that they are strongest when the domain is well represented in their training data, the problem resembles prior examples, and the structure of the problem is stable. They are weaker when conditions are genuinely novel, physical interactions are poorly represented in the data, or the problem requires a deep understanding of context and consequence.
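To make the idea of ‘statistical relationships learned during training’ concrete, here is a deliberately tiny sketch in Python. It is not how production LLMs work (they use large neural networks, not word-pair counts), and the corpus and function names are hypothetical, invented for illustration. It does show the same basic dependence on training data: the model answers readily in contexts it has seen and has nothing grounded to offer in a context it has not.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count how often each word follows each other word (a toy 'statistical relationship' model)."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if `word` never appeared in training."""
    counts = follows.get(word.lower())  # .get does not create new entries in a defaultdict
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical training text, invented for illustration only.
corpus = [
    "close the valve before draining the line",
    "close the valve before opening the vent",
    "check the pressure before opening the vent",
]

model = train_bigrams(corpus)
print(predict_next(model, "close"))   # familiar context -> the
print(predict_next(model, "before"))  # most common pattern wins -> opening
print(predict_next(model, "quench"))  # word never seen in training -> None
```

A real LLM generalizes far better than this and would still produce a fluent answer for the unfamiliar word. The point is only that its answers are grounded in patterns present in its training data, which is exactly what a genuinely novel condition lacks.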

Bhopal

A commenter on an earlier post mentioned the 1984 chemical plant catastrophe in Bhopal, India. The facts of that event can now be part of an LLM’s training data. But could an LLM have recognized the emerging catastrophe before the event, when the signals were fragmented, ambiguous, and embedded in day-to-day operating practice?

Intuition

Experienced operators and engineers often recognize danger before they can fully articulate the reason for their concern. They have hunches based on anomalies such as:

An unusual sound

An unexpected trend

A subtle operational inconsistency

An interaction that ‘doesn’t feel right’.

This type of judgment is difficult to formalize because it often depends on tacit understanding accumulated over years of direct operational experience. Such hunches are rarely written down, so they are largely absent from the text on which LLMs are trained.

More Paradoxes

Here are some more PSM paradoxes.

Too Much Data

Modern facilities generate enormous quantities of data.

More data should improve understanding.

But excessive data can obscure critical signals.

Important weak indicators become buried.

Organizations become ‘data rich but insight poor’.

Knowledge

The more organizations know about hazards, the more they realize how much uncertainty remains.

They learn that rare interactions, abnormal conditions, organizational behavior, and human adaptation cannot be fully modeled.

Hence increased expertise often produces greater humility rather than greater certainty.

This contrasts sharply with many AI systems, which often generate responses with high apparent confidence regardless of actual uncertainty.
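The overconfidence point can be illustrated with a statistical model far simpler than an LLM. The Python sketch below uses a hypothetical logistic model that estimates whether a temperature reading is ‘normal’; its name, coefficients and training range are all invented for illustration. Nothing in its output signals that an input lies far outside the data it was fitted on; in fact, its confidence rises the further it extrapolates. LLMs are much more complex and behave differently in detail, but the underlying issue, a statistical output with no built-in sense of its own ignorance, is broadly similar.

```python
import math

def sigmoid(z):
    """Logistic function: maps any real score to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical model of "probability that a reactor temperature is normal".
# Coefficients are invented, and we assume the model was fitted only on
# readings between 60 and 90 degC.
W, B = -0.2, 16.0
TRAINED_RANGE = (60.0, 90.0)

def p_normal(temp_c):
    """The model's stated confidence that the reading is normal."""
    return sigmoid(W * temp_c + B)

def assess(temp_c):
    """Report the model's confidence, flagging inputs outside its assumed training range."""
    p = p_normal(temp_c)
    lo, hi = TRAINED_RANGE
    if not lo <= temp_c <= hi:
        return f"{p:.2f} (outside training range: treat with caution)"
    return f"{p:.2f}"

print(f"{p_normal(70):.2f}")  # inside the training range -> 0.88
print(f"{p_normal(10):.2f}")  # never seen in training -> 1.00
print(assess(10))             # -> 1.00 (outside training range: treat with caution)
```

The bare model reports near-certainty at 10 degC precisely because that reading is far from anything it has seen. The range check in `assess()` is one simple, and far from complete, way to make a model's ignorance visible; it is the kind of humility an experienced engineer brings without being asked.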

Conclusion

LLMs are invaluable tools for organizing information, identifying patterns, generating procedures, and reviewing large bodies of technical material. But process safety management also involves judgment under uncertainty, recognition of anomalies, and an understanding of paradoxical conditions. These capabilities remain deeply dependent on human experience and operational understanding.

Here is a final paradox: the safer a facility appears to become, the greater the risk that vigilance will decline. Long periods without incidents may create confidence, normalization, and complacency.

In other words,

Safety success can itself become a source of vulnerability.