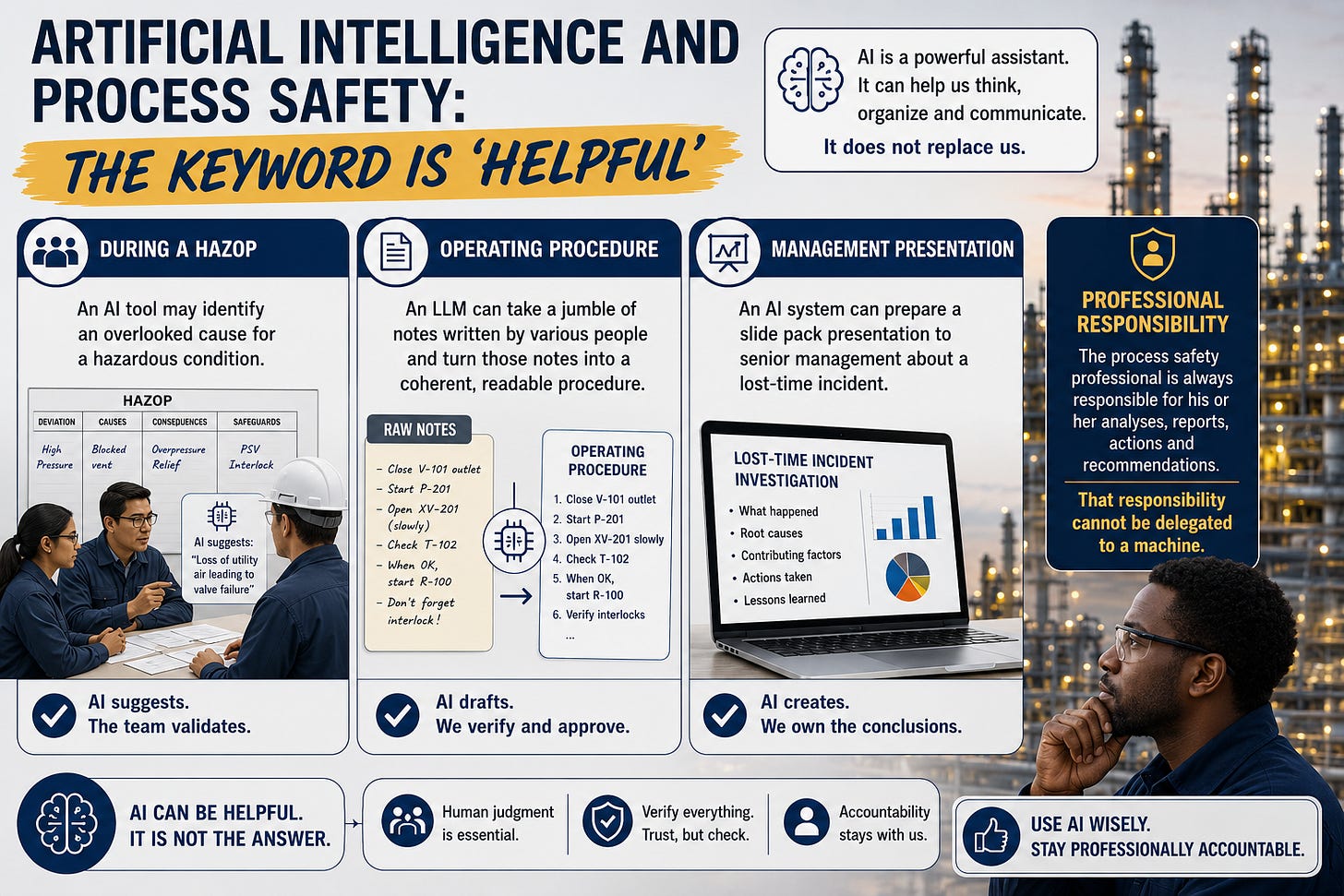

Artificial Intelligence and Process Safety: Helpful ― Not Authoritative

Like many process safety professionals, I have been using Artificial Intelligence (AI) tools ― mostly Large Language Models (LLMs) ― to support my research and writing. Although AI tools are helpful (a word that crops up a lot in this post), we need to be aware of potential pitfalls, particularly when it comes to professional responsibility.

All professionals, regardless of their discipline, have to be sure of the quality and integrity of their work. But the information provided by AI systems cannot always be trusted. Not only may that information be inaccurate or misleading, but these systems may also ‘hallucinate’, i.e., confidently generate false statements. (Strictly speaking, they do not lie, because lying implies an intent to deceive.)

So how can process safety professionals use AI without violating the principles of their discipline? After all, a mistake in an analysis or a report could lead to someone being injured or killed.

One response to this concern is that AI should be seen as a helpful tool, not as ‘the answer’. In that capacity, it can serve in three roles:

An idea generator

A drafting/editing assistant

An analytical support tool

These three roles align with the framework described in previous posts: Decision Strategy → Execution → Analysis/Reporting.

Here are some examples:

During a HAZOP, an AI tool may identify an overlooked cause of a hazardous condition. But the HAZOP team must then validate that cause.

An LLM can take a jumble of notes, written by various people, on starting up a chemical reactor and turn them into a coherent, readable operating procedure. But operations management must then formally accept the new procedure (and train people in its use).

An LLM can prepare a slide pack for a presentation to senior management on a lost-time incident. But the person giving the presentation had better be sure that he or she can respond to challenging questions.

The keyword in all these situations is ‘helpful’. The AI system does not replace the professional, nor does it make decisions on its own. To be effective, AI still needs human instructions ― for example, on how a slide pack presentation should be structured. Moreover, the process safety professional is always ― repeat always ― responsible for his or her analyses, reports, actions and recommendations. That responsibility cannot be delegated to a machine.

To summarize, here is a useful test:

Would I be willing to sign my name to this AI-generated analysis or report if I knew that it would be reviewed by an experienced investigator, regulator, or opposing expert after an incident?

If the answer to this question is ‘No’, then the AI output should be used with caution. It may be useful. It may be interesting. It may be thought-provoking. It may point to issues that deserve further investigation. But it is not professional work.